67 integrated tools across 6 quality pillars. 12 scan profiles from 30-second pre-commit to full audit. A 6-stage finding enrichment pipeline that eliminates 95% of false positives. Cryptographic attestation with Ed25519 signatures and SLSA L3 provenance.

AI-generated code has measurable quality problems. Research consistently shows high rates of dead code, known vulnerabilities, and inconsistent patterns in AI output. The issue isn't that AI writes bad code — it's that AI writes code without the safety net of an integrated quality pipeline.

Running 67 individual tools manually for every change is impractical. Each tool has its own CLI, configuration format, output schema, and false positive characteristics. The industry needs a unified platform that orchestrates all these tools, intelligently triages findings through enrichment stages, and produces a single actionable quality score.

The code assurance platform solves this by treating code quality as an integrated pipeline rather than a checklist of disconnected tools.

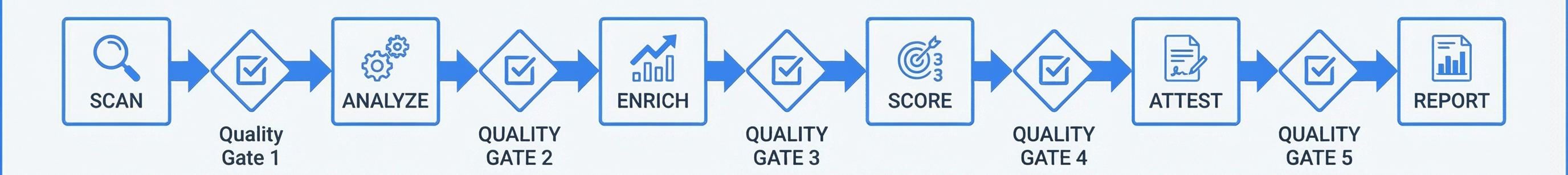

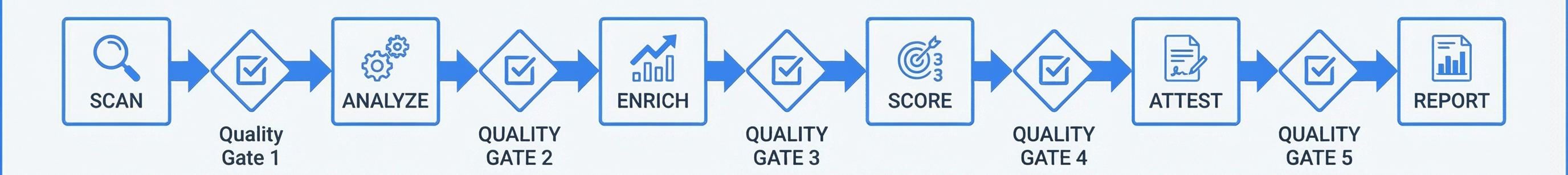

The platform organizes 67 tools into 6 quality pillars, runs them through configurable scan profiles, enriches findings through a 6-stage pipeline to eliminate false positives, and produces a 1000-point quality score with cryptographic attestation.

1. Source code
2. Scan profile selection (1 of 12 profiles)
3. 6-pillar execution (parallel tool runs):
   - Code Quality (18 tools)
   - Security (13 tools)
   - Testing (8 tools)
   - Performance (6 tools)
   - Supply Chain (8 tools)
   - Policy & Reporting (14 tools)
4. 6-stage finding enrichment pipeline
5. 1000-point quality scoring
6. Cryptographic attestation (Ed25519 + Rekor + SLSA)
7. Reports (PDF, HTML, JSON, SARIF, CSV, executive summary)

The Code Quality pillar (18 tools) combines 10 custom AI analyzers with 8 OSS tools.

10 custom AI analyzers: dead code detection, naming consistency, cyclomatic complexity, coupling analysis, duplication detection, error handling patterns, type safety, documentation coverage, pattern consistency, dependency quality.

8 OSS tools: ESLint (JS/TS), Pylint (Python), Ruff (fast Python linter), Clippy (Rust), golangci-lint (Go), shellcheck (Shell), hadolint (Dockerfile), markdownlint (Markdown).

The Security pillar runs 13 tools:

| Tool | Purpose |
|---|---|
| Semgrep | SAST with custom rules for framework-specific patterns |
| Trivy | Container image and dependency vulnerability scanning |
| Bandit | Python-specific security analysis |
| Gitleaks | Secrets detection in code and git history |
| Bearer | Data flow analysis for sensitive data exposure |
| npm audit / pip-audit | Dependency audits against vulnerability advisory databases |
| OWASP Dependency-Check | CVE cross-referencing for dependencies across multiple ecosystems |
| Safety | Python known-vulnerability database |
| detect-secrets | Entropy-based secret detection |
| TruffleHog | Secrets scanning across git history |
| Checkov | Infrastructure-as-Code security scanning |
| Kubesec | Kubernetes manifest security |
| Custom detector | Prompt injection and AI-specific vulnerability detection |

The 12 scan profiles trade depth for speed:

| Profile | Use Case | Duration | Scope |
|---|---|---|---|
| quick | Fast feedback during development | ~30s | Lint + basic security |
| standard | Balanced daily development | 5-10 min | All pillars sampled |
| deep | Comprehensive analysis | 20-30 min | Every tool enabled |
| security-focused | Security review | 10-15 min | All 13 security tools + SAST |
| pre-commit | Git hook | ~30s | Changed files only |
| ci-pipeline | CI/CD integration | 5-10 min | Parallel execution, gate output |
| pre-release | Before deployment | 20-30 min | Full audit with sign-off |
| compliance | Regulatory audit | 15-20 min | SOC2/ISO/NIST mapping |
| performance | Performance review | ~10 min | Performance pillar + Lighthouse |
| supply-chain | Supply chain audit | 5-10 min | SBOM + signatures + provenance |
| custom | User-defined | Varies | Select specific tools |
| full-audit | Everything, no exceptions | 30+ min | All 67 tools |

Raw tool output typically contains 70-90% false positives. The enrichment pipeline reduces this to under 5%:

| Stage | Action | FP Reduction |
|---|---|---|
| 1. Static Analysis | Collect raw findings from all tools | Baseline |
| 2. Framework-Aware Suppression | Filter findings that match framework conventions (e.g., parameterized Django ORM queries flagged as SQL injection) | ~40% removed |
| 3. Reachability Analysis | Is the vulnerable code reachable from entry points? | ~20% more removed |
| 4. Dataflow Tracing | Can tainted data actually flow to the vulnerable sink? | ~15% more removed |
| 5. Exploitability Scoring | CVSS-adjusted scoring based on deployment context | Prioritization |
| 6. LLM-Assisted Verification | AI reviews remaining findings for final false positive elimination | ~10% more removed |

After all 6 stages, the remaining findings are high-confidence, actionable issues with context-aware severity scores.
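The stages compose naturally as a chain of filters with one reprioritization step. The following is a minimal sketch, not the platform's implementation: it assumes each finding already carries a `framework_safe` flag (from stage 2 rules) and a `taint_reaches_sink` flag (from an upstream dataflow pass), uses a breadth-first search over a call graph for reachability, and takes the LLM verifier as any callable. The `Finding` fields, the 0.6 exposure multiplier, and all function names are hypothetical.

```python
from collections import deque
from dataclasses import dataclass, replace
from typing import Callable, Dict, Iterable, List, Set


@dataclass(frozen=True)
class Finding:
    rule: str
    function: str             # function containing the flagged code
    framework_safe: bool      # hypothetical: matched a known-safe framework pattern
    taint_reaches_sink: bool  # hypothetical: output of an upstream dataflow pass
    cvss: float
    priority: float = 0.0


def reachable(call_graph: Dict[str, List[str]], entry_points: Iterable[str]) -> Set[str]:
    """Breadth-first search over the call graph from all entry points."""
    seen = set(entry_points)
    queue = deque(seen)
    while queue:
        for callee in call_graph.get(queue.popleft(), []):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return seen


def enrich(findings: List[Finding], call_graph: Dict[str, List[str]],
           entry_points: Iterable[str], internet_facing: bool,
           llm_confirms: Callable[[Finding], bool]) -> List[Finding]:
    # Stage 1 (collection) is the input list itself.
    # Stage 2: framework-aware suppression.
    kept = [f for f in findings if not f.framework_safe]
    # Stage 3: drop findings in code no entry point can reach.
    live = reachable(call_graph, entry_points)
    kept = [f for f in kept if f.function in live]
    # Stage 4: keep only findings where tainted data reaches the sink.
    kept = [f for f in kept if f.taint_reaches_sink]
    # Stage 5: exploitability scoring reprioritizes but removes nothing.
    exposure = 1.0 if internet_facing else 0.6  # hypothetical context multiplier
    kept = [replace(f, priority=round(f.cvss * exposure, 1)) for f in kept]
    # Stage 6: LLM-assisted verification (any callable; an LLM client in practice).
    kept = [f for f in kept if llm_confirms(f)]
    return sorted(kept, key=lambda f: f.priority, reverse=True)
```

Ordering the cheap, deterministic filters first means the expensive LLM stage only sees the small residue of findings that survived stages 2 through 4.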

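Each scan produces a composite score from 0 to 1000. Pillar scores are weighted according to the scan profile's emphasis, findings are penalized on a square-root curve, and 15 bonus categories (such as test coverage, type safety, and documentation) add credit. Scores are tracked over time so that regressions trigger alerts.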
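The exact weighting is not specified here. A minimal sketch of the profile-weighted blend, assuming each pillar has already been reduced to a 0.0-1.0 score and bonus categories contribute flat points, might look like this; `PROFILE_WEIGHTS`, its values, and `composite_score` are hypothetical.

```python
PILLARS = ("code_quality", "security", "testing", "performance",
           "supply_chain", "policy_reporting")

# Hypothetical emphasis weights; the platform's actual weights are not published here.
PROFILE_WEIGHTS = {
    "standard": {p: 1.0 for p in PILLARS},
    "security-focused": {
        "code_quality": 0.5, "security": 3.0, "testing": 0.5,
        "performance": 0.25, "supply_chain": 1.5, "policy_reporting": 0.5,
    },
}


def composite_score(pillar_scores: dict, profile: str,
                    bonus_points: float = 0, max_score: int = 1000) -> int:
    """Weighted mean of per-pillar scores (each 0.0-1.0), scaled to 0..max_score.

    bonus_points stands in for the bonus categories; the result is capped at max_score.
    """
    weights = PROFILE_WEIGHTS[profile]
    total = sum(weights.values())
    mean = sum(weights[p] * pillar_scores[p] for p in PILLARS) / total
    return min(max_score, round(mean * max_score + bonus_points))
```

With every pillar perfect except security at 0.5, this sketch yields 917 under `standard` but 760 under `security-focused`, which is the point of profile emphasis: the same scan result is judged more harshly when the profile cares most about the weak pillar.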

The platform exposes these integration points:

- MCP server: native Claude Code integration. Scan, query results, and review findings through natural language.
- REST API: programmatic access for CI/CD pipelines, custom dashboards, and automation workflows.
- n8n workflows: automated scan pipelines triggered by git push, PR creation, or scheduled intervals.

Build a unified code assurance platform with these components:

1. TOOL ORCHESTRATOR:
   - Manage 67 tools across 6 pillars: Code Quality (18), Security (13), Testing (8), Performance (6), Supply Chain (8), Policy/Reporting (14)
   - Parallel execution within pillars, sequential across pipeline stages
   - Unified finding format normalizing output from all tools
   - Docker-based tool isolation for reproducible environments
2. SCAN PROFILES (12):
   - quick (30s): lint + basic security, changed files only
   - standard (5-10min): all pillars sampled
   - deep (20-30min): every tool enabled
   - security-focused: all 13 security tools + SAST + DAST
   - pre-commit: git hook, changed files only
   - ci-pipeline: parallel, gate output format
   - pre-release: full audit with sign-off
   - compliance: SOC2/ISO/NIST mapping
   - performance: Lighthouse + profiling
   - supply-chain: SBOM + signatures + provenance
   - custom: user-selected tools
   - full-audit: all 67 tools, no exceptions
3. FINDING ENRICHMENT PIPELINE (6 stages):
   - Stage 1: Collect raw findings from tool runs
   - Stage 2: Framework-aware suppression (Django/Rails/Express patterns)
   - Stage 3: Reachability analysis (entry point → vulnerable code path)
   - Stage 4: Dataflow tracing (tainted source → vulnerable sink)
   - Stage 5: Exploitability scoring (CVSS + deployment context)
   - Stage 6: LLM-assisted verification (AI false positive review)
4. QUALITY SCORING:
   - 1000-point scale with sqrt penalty curve
   - Weighted pillar scores based on profile emphasis
   - 15 bonus categories (test coverage, type safety, docs, etc.)
   - Historical trend tracking with regression alerts
5. CRYPTOGRAPHIC ATTESTATION:
   - Ed25519 key generation and scan result signing
   - Rekor transparency log integration
   - SLSA Level 3 provenance generation
   - Verification CLI for audit teams
6. REPORTING:
   - 6 output formats: PDF, HTML, JSON, SARIF, CSV, executive summary
   - Compliance mapping (SOC2, ISO 27001, NIST)
   - CI/CD gate pass/fail with configurable thresholds
   - Slack/webhook notifications
7. INTEGRATION:
   - MCP server for Claude Code native access
   - REST API for programmatic use
   - n8n workflow templates for automation
   - Claude Code skill for natural language interface

Build as a Docker-based platform with tool containers, a central orchestrator service, and integration endpoints.

Running tools individually produces duplicate findings, inconsistent severity ratings, and fragmented output formats. A unified platform normalizes output, deduplicates findings, and produces a single quality score. The cost is platform complexity, which is worth paying for the 95% false positive reduction.
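Normalization and deduplication are what turn many tool reports into one finding list. A minimal sketch, assuming a simplified subset of Semgrep's and Bandit's JSON result fields and a hypothetical `RULE_CATEGORY` table that maps tool-specific rule IDs onto shared categories, could merge findings by location and category and keep the highest severity:

```python
from dataclasses import dataclass, field, replace
from typing import Dict, List, Set, Tuple

SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}

# Illustrative mapping from tool-specific rule IDs to a shared category (hypothetical).
RULE_CATEGORY = {"B602": "command-injection", "subprocess-shell-true": "command-injection"}

# Classic Semgrep levels; unknown levels fall back to "medium" in this sketch.
SEMGREP_SEVERITY = {"ERROR": "high", "WARNING": "medium", "INFO": "low"}


@dataclass
class UnifiedFinding:
    path: str
    line: int
    category: str
    severity: str
    tools: Set[str] = field(default_factory=set)


def from_semgrep(result: dict) -> UnifiedFinding:
    severity = SEMGREP_SEVERITY.get(result["extra"]["severity"], "medium")
    rule = result["check_id"].split(".")[-1]  # last segment of the dotted rule ID
    return UnifiedFinding(result["path"], result["start"]["line"],
                          RULE_CATEGORY.get(rule, rule), severity, {"semgrep"})


def from_bandit(result: dict) -> UnifiedFinding:
    # Bandit reports issue_severity as HIGH / MEDIUM / LOW.
    rule = result["test_id"]
    return UnifiedFinding(result["filename"], result["line_number"],
                          RULE_CATEGORY.get(rule, rule),
                          result["issue_severity"].lower(), {"bandit"})


def deduplicate(findings: List[UnifiedFinding]) -> List[UnifiedFinding]:
    """Merge findings at the same path, line, and category; keep the highest severity."""
    merged: Dict[Tuple[str, int, str], UnifiedFinding] = {}
    for f in findings:
        key = (f.path, f.line, f.category)
        if key not in merged:
            merged[key] = replace(f, tools=set(f.tools))  # copy so inputs are not mutated
            continue
        m = merged[key]
        m.tools |= f.tools
        if SEVERITY_RANK[f.severity] > SEVERITY_RANK[m.severity]:
            m.severity = f.severity
    return list(merged.values())
```

A Semgrep `subprocess-shell-true` hit and a Bandit `B602` hit on the same line collapse into one `command-injection` finding attributed to both tools, which also resolves the inconsistent severity ratings by taking the stricter one.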

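Raw tool output is ~70-90% false positives. Each enrichment stage progressively reduces noise: framework suppression, reachability analysis, and dataflow tracing remove findings that cannot realistically be exploited, exploitability scoring reorders what remains by deployment risk, and LLM verification makes a final false-positive pass. The result is actionable findings, not alert fatigue.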

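Under a linear penalty, every additional finding costs the same, so enough low-severity issues add up to the same deduction as a critical one (10 low findings weighted at one tenth of a critical cost exactly as much as 1 critical). Square-root penalization grows sublinearly with finding count, which better matches real-world impact: heavily weighted critical issues dominate the score, while accumulations of minor issues produce noticeable but not catastrophic penalties.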
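To make the comparison concrete, here is a minimal sketch assuming hypothetical per-severity weights (a low finding weighs one tenth of a critical one) and a square root applied to the count within each severity tier; the platform's real weights and curve parameters are not documented here.

```python
import math

# Hypothetical per-severity weights: a low finding weighs one tenth of a critical one.
SEVERITY_WEIGHTS = {"critical": 100.0, "high": 40.0, "medium": 20.0, "low": 10.0}
MAX_SCORE = 1000


def linear_penalty(counts: dict) -> float:
    """Every finding costs its full weight."""
    return sum(SEVERITY_WEIGHTS[sev] * n for sev, n in counts.items())


def sqrt_penalty(counts: dict) -> float:
    """Within each severity tier, the penalty grows with the square root of the count."""
    return sum(SEVERITY_WEIGHTS[sev] * math.sqrt(n) for sev, n in counts.items())


def score(counts: dict, penalty=sqrt_penalty) -> int:
    # Clamp at zero so large linear penalties cannot produce negative scores.
    return max(0, round(MAX_SCORE - penalty(counts)))
```

With these weights, one critical finding scores 900 under either curve. Ten low findings also score 900 under the linear penalty, but 968 under the square-root curve, so a single critical issue costs several times more than a cluster of minor ones.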

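Compared with RSA, Ed25519 offers much smaller keys and signatures (32-byte public keys and 64-byte signatures, versus 256 bytes each for RSA-2048), much faster key generation and signing, and deterministic signatures that do not depend on a random number generator at signing time, all at roughly 128-bit security (comparable to RSA-3072). It is a widely adopted modern choice for artifact signing.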
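Whichever algorithm signs the result, signer and verifier must agree on the exact bytes being signed. A minimal sketch, assuming the signed payload is an in-toto Statement v1 (the statement format SLSA provenance also uses) with the scan result as its predicate, is shown below; `SCAN_PREDICATE_TYPE` and both helper names are hypothetical. The resulting bytes are what an Ed25519 key would sign, for example with `Ed25519PrivateKey.sign()` from the Python `cryptography` package, and production tooling usually wraps the statement in a DSSE envelope first.

```python
import hashlib
import json

IN_TOTO_STATEMENT_V1 = "https://in-toto.io/Statement/v1"
# Hypothetical predicate type for scan results; not a registered in-toto predicate.
SCAN_PREDICATE_TYPE = "https://example.com/code-assurance/scan-result/v1"


def canonical_bytes(obj: dict) -> bytes:
    """Deterministic JSON: sorted keys, no insignificant whitespace, UTF-8.

    Approximates RFC 8785 (JCS) for simple data; it does not normalize numbers like JCS.
    """
    return json.dumps(obj, sort_keys=True, separators=(",", ":"),
                      ensure_ascii=False).encode("utf-8")


def build_statement(artifact_name: str, artifact_bytes: bytes, scan_result: dict) -> dict:
    """Bind a scan result (predicate) to the exact artifact it describes (subject)."""
    return {
        "_type": IN_TOTO_STATEMENT_V1,
        "subject": [{
            "name": artifact_name,
            "digest": {"sha256": hashlib.sha256(artifact_bytes).hexdigest()},
        }],
        "predicateType": SCAN_PREDICATE_TYPE,
        "predicate": scan_result,
    }
```

Because the encoding is key-order independent, two services that build the same statement from differently ordered dictionaries produce byte-identical payloads, so a signature made by one verifies against the other.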

Each tool runs in its own container. This ensures reproducible environments (same tool version, same dependencies), prevents tool conflicts, and enables parallel execution across all 6 pillars.
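A minimal sketch of that isolation model, assuming each tool ships as its own image (ideally pinned by digest) and a thread pool fans out one `docker run` per tool, could look like the following. `docker_argv`, `run_plan`, and the plan layout are hypothetical; the `docker run` flags are standard, but some tools need network access (vulnerability database downloads) or writable scratch space, so flags would be tuned per tool.

```python
import subprocess
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def docker_argv(image: str, tool_args: List[str], src_dir: str,
                network: str = "none") -> List[str]:
    """Build a `docker run` command that mounts the source tree read-only."""
    return [
        "docker", "run", "--rm",
        "--network", network,
        "--read-only",                 # container root filesystem is read-only
        "--tmpfs", "/tmp",             # writable scratch space for the tool
        "-v", f"{src_dir}:/src:ro",    # source mounted read-only
        "-w", "/src",
        image, *tool_args,
    ]


def run_subprocess(argv: List[str]):
    proc = subprocess.run(argv, capture_output=True, text=True)
    return proc.returncode, proc.stdout


def run_plan(plan: Dict[str, Dict[str, List[str]]],
             runner: Callable = run_subprocess, max_workers: int = 8):
    """Run every tool in every pillar concurrently; returns {pillar: {tool: result}}."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {
            (pillar, tool): pool.submit(runner, argv)
            for pillar, tools in plan.items()
            for tool, argv in tools.items()
        }
    # Leaving the `with` block waits for all submitted runs to finish.
    results: Dict[str, Dict[str, object]] = {}
    for (pillar, tool), future in futures.items():
        results.setdefault(pillar, {})[tool] = future.result()
    return results
```

Passing the runner as a parameter keeps the orchestration testable without Docker and leaves room for a remote or queued executor later.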

12 predefined profiles cover common scenarios without requiring tool-by-tool configuration. The custom profile provides escape-hatch flexibility. Profiles are the fast path; raw config is the power-user path.
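The fast-path/power-user split can be sketched as presets plus overrides. This assumes hypothetical profile fields (`pillars`, `changed_files_only`, `timeout_s`, `tools`) and shows only a few of the 12 profiles; the real configuration schema is not documented here.

```python
import copy
from typing import Optional

ALL_PILLARS = ["code_quality", "security", "testing", "performance",
               "supply_chain", "policy_reporting"]

# A few of the 12 profiles, with hypothetical field names and values.
PROFILES = {
    "quick": {"pillars": ["code_quality", "security"], "changed_files_only": True, "timeout_s": 30},
    "pre-commit": {"pillars": ["code_quality", "security"], "changed_files_only": True, "timeout_s": 30},
    "full-audit": {"pillars": ALL_PILLARS, "changed_files_only": False, "timeout_s": None},
    "custom": {"pillars": [], "changed_files_only": False, "timeout_s": None},
}


def resolve_profile(name: str, overrides: Optional[dict] = None) -> dict:
    """Start from a predefined profile and apply user overrides (the power-user path)."""
    if name not in PROFILES:
        raise ValueError(f"unknown profile: {name}")
    config = copy.deepcopy(PROFILES[name])  # never mutate the shared presets
    config.update(overrides or {})
    if name == "custom" and not config.get("tools"):
        raise ValueError("the custom profile requires an explicit 'tools' list")
    return config
```

Deep-copying the preset matters: without it, a caller appending to `pillars` would silently change the profile for every later scan.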

Governance policy can require scan profiles for specific conductor tiers. STANDARD-tier builds might require the standard profile; MAJOR-tier requires pre-release or full-audit.
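Such a policy reduces to a lookup from tier to acceptable profiles. A minimal sketch, using a hypothetical `REQUIRED_PROFILES` table that mirrors the two tiers named above:

```python
from typing import Dict, Set

# Hypothetical policy table mirroring the STANDARD and MAJOR tiers.
REQUIRED_PROFILES: Dict[str, Set[str]] = {
    "STANDARD": {"standard"},
    "MAJOR": {"pre-release", "full-audit"},
}


def profile_allowed(tier: str, profile: str) -> bool:
    """True if the scan profile satisfies the governance policy for this tier.

    Tiers without an entry impose no profile requirement.
    """
    required = REQUIRED_PROFILES.get(tier)
    return required is None or profile in required
```

An exact-match set is the simplest policy; a stricter variant could rank profiles by depth and accept any profile at least as thorough as the required one.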

Scan results, quality scores, and finding patterns are stored in vector memory. Future scans recall similar past findings for trend analysis and pattern recognition.

The conductor triggers scans as part of STANDARD/MAJOR workflows. The QA and security review agents use scan results as input for their gate evaluations.
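A gate evaluation can be as simple as comparing the score and severity counts against configurable thresholds. In this minimal sketch the default thresholds (750 points, zero criticals, at most three highs) are hypothetical; returning the failure reasons alongside the verdict gives the review agents something concrete to report.

```python
from typing import Dict, List, Tuple


def evaluate_gate(score: int, severity_counts: Dict[str, int], min_score: int = 750,
                  max_critical: int = 0, max_high: int = 3) -> Tuple[bool, List[str]]:
    """Pass/fail gate over a scan result; thresholds are hypothetical defaults."""
    reasons = []
    if score < min_score:
        reasons.append(f"score {score} below minimum {min_score}")
    if severity_counts.get("critical", 0) > max_critical:
        reasons.append(f"{severity_counts['critical']} critical finding(s), max {max_critical}")
    if severity_counts.get("high", 0) > max_high:
        reasons.append(f"{severity_counts['high']} high finding(s), max {max_high}")
    return (not reasons, reasons)
```

For example, `evaluate_gate(900, {"high": 1})` passes with no reasons, while a 700-point scan with one critical finding fails on both counts.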

The platform exposes a Claude Code skill and MCP server, both installable as plugin components. PreToolUse hooks can trigger pre-commit scans before code is written.